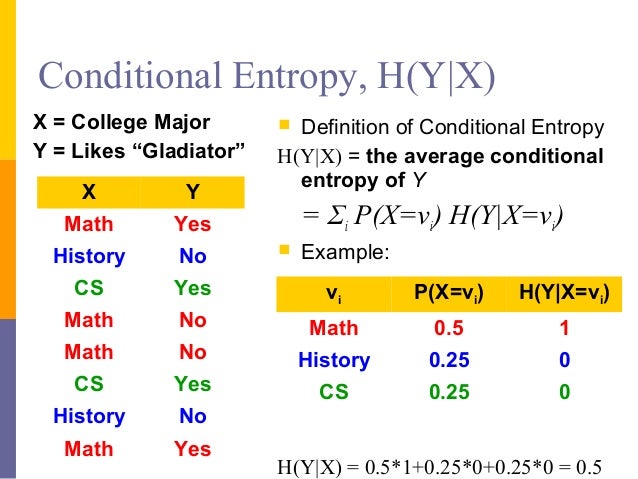

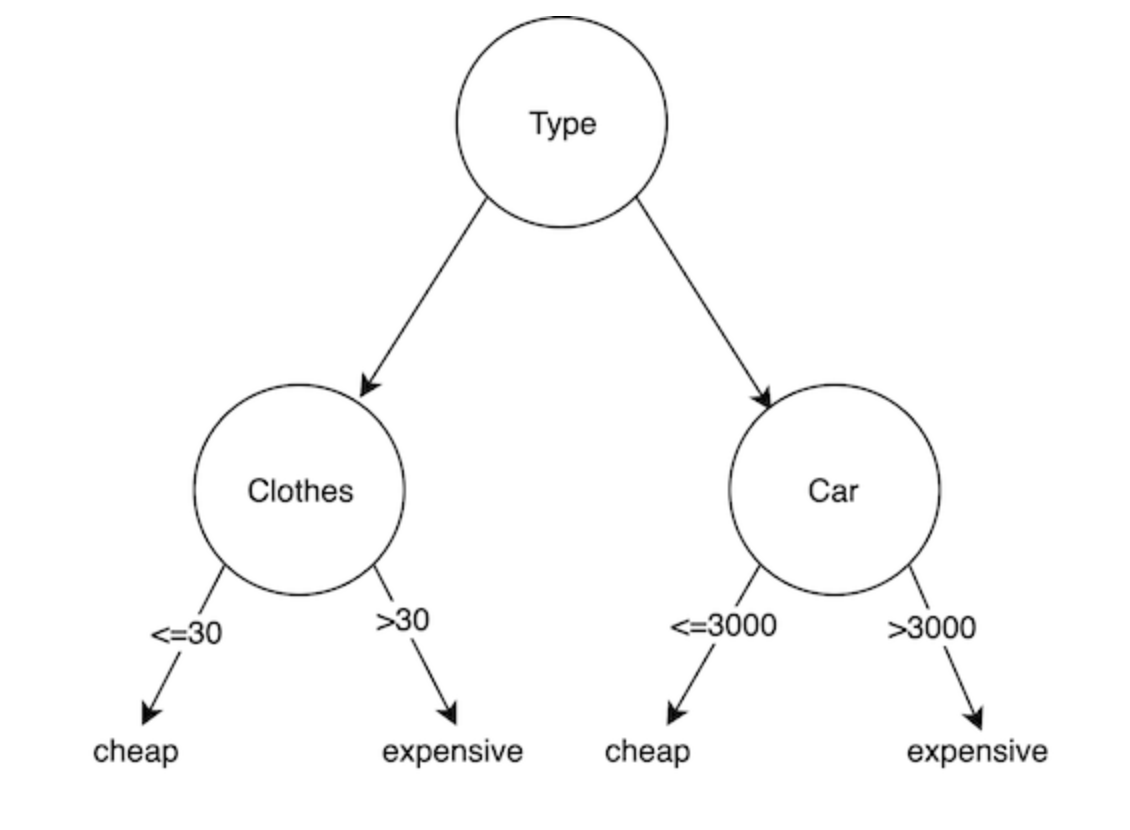

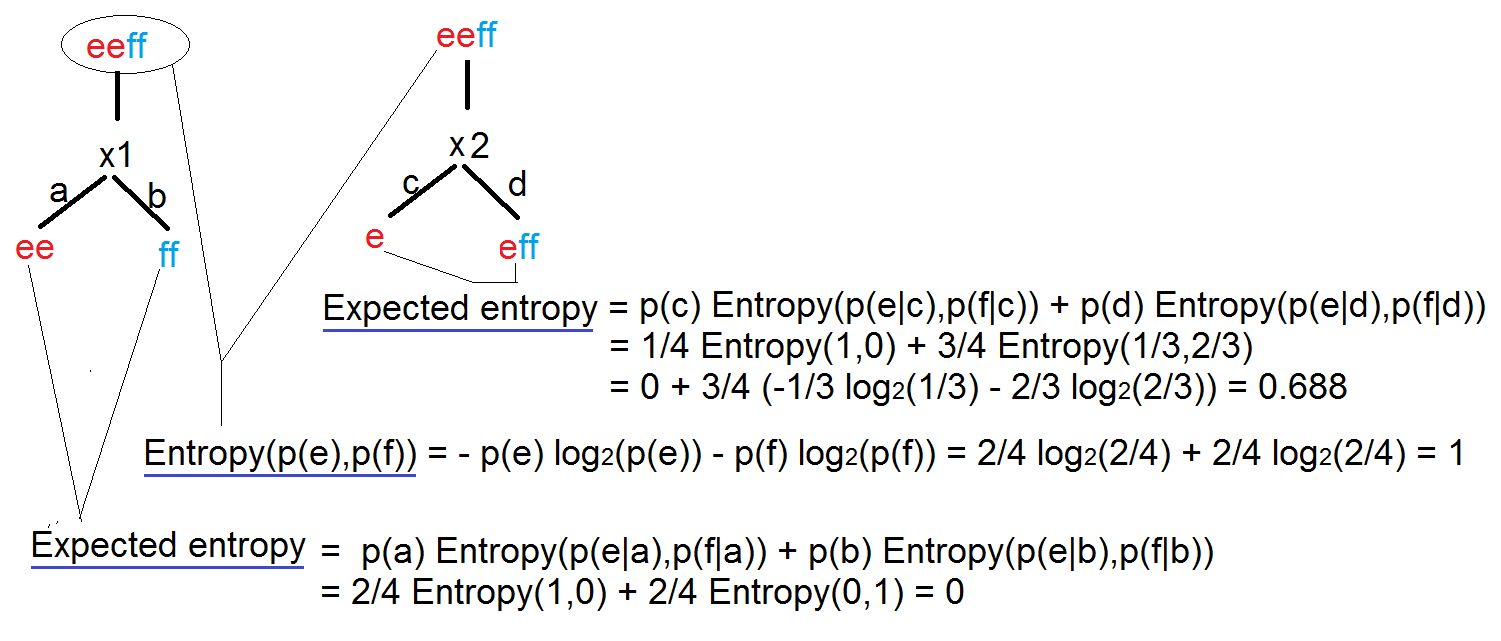

Decision trees are supervised learning algorithms used for both classification and regression tasks. In this first part of our decision tree tutorial we will concentrate on classification. Decision trees belong to the information-based learning algorithms, which use different measures of information gain for learning. We can use decision trees for problems with continuous as well as categorical input and target features.

The main idea of decision trees is to find those descriptive features which contain the most "information" regarding the target feature. We then split the dataset along the values of these features so that the target feature values of the resulting sub-datasets are as pure as possible. The descriptive feature that leaves the target feature most pure is said to be the most informative one. This search for the "most informative" feature is repeated until we meet a stopping criterion, at which point we end up in so-called leaf nodes. The leaf nodes contain the predictions we will make for new query instances presented to our trained model. This is possible because the model has, in a sense, learned the underlying structure of the training data and hence can, given some assumptions, make predictions about the target feature value (class) of unseen query instances.

A decision tree mainly consists of a root node, interior nodes, and leaf nodes, which are connected by branches.

Decision trees are further subdivided according to whether the target feature is continuously scaled, like house prices, or categorically scaled, like animal species.

In simplified terms, the process of training a decision tree and predicting the target features of query instances is as follows:

1. Present a dataset consisting of a number of training instances characterized by a number of descriptive features and a target feature.
2. Train the decision tree model by repeatedly splitting the dataset along the values of the descriptive features, using a measure of information gain to choose each split.
3. Grow the tree until a stopping criterion is met, then create leaf nodes which represent the predictions we want to make for new query instances.
4. Show query instances to the tree and run them down the tree until they arrive at leaf nodes.
5. DONE. Congratulations, you have found the answers to your questions.
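The training and prediction process described above can be sketched in plain Python. This is a minimal ID3-style sketch, not the tutorial's own code, and it rests on several assumptions: all descriptive features are categorical, entropy is used as the measure behind information gain, and the only stopping criteria are "the node is pure" or "no descriptive features are left" (no pruning, no handling of continuous features). The toy animal dataset and the names `entropy`, `information_gain`, `build_tree` and `predict` are hypothetical illustrations.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * math.log2(n / total) for n in Counter(labels).values())


def information_gain(rows, feature, target):
    """Target entropy minus the weighted entropy left after splitting on `feature`."""
    labels = [r[target] for r in rows]
    remainder = 0.0
    for value in {r[feature] for r in rows}:
        subset = [r[target] for r in rows if r[feature] == value]
        remainder += len(subset) / len(rows) * entropy(subset)
    return entropy(labels) - remainder


def build_tree(rows, features, target):
    """Grow the tree recursively; a leaf is a plain class label, an interior node a dict."""
    labels = [r[target] for r in rows]
    majority = Counter(labels).most_common(1)[0][0]
    # Assumed stopping criteria: the node is pure, or no descriptive features remain.
    if len(set(labels)) == 1 or not features:
        return majority
    # Split on the most informative feature, i.e. the one with the highest information gain.
    best = max(features, key=lambda f: information_gain(rows, f, target))
    remaining = [f for f in features if f != best]
    node = {"feature": best, "branches": {}, "default": majority}
    for value in {r[best] for r in rows}:
        subset = [r for r in rows if r[best] == value]
        node["branches"][value] = build_tree(subset, remaining, target)
    return node


def predict(tree, query):
    """Run a query instance down the tree until a leaf node is reached."""
    while isinstance(tree, dict):
        value = query.get(tree["feature"])
        if value not in tree["branches"]:
            # Value never seen during training: fall back to the node's majority class.
            return tree["default"]
        tree = tree["branches"][value]
    return tree


# Hypothetical toy data: descriptive features "legs" and "feathers", target "species".
data = [
    {"legs": 2, "feathers": True, "species": "bird"},
    {"legs": 2, "feathers": True, "species": "bird"},
    {"legs": 4, "feathers": False, "species": "mammal"},
    {"legs": 4, "feathers": False, "species": "mammal"},
    {"legs": 2, "feathers": False, "species": "mammal"},
    {"legs": 0, "feathers": False, "species": "reptile"},
]

tree = build_tree(data, ["legs", "feathers"], "species")
print(tree["feature"])  # → legs
print(predict(tree, {"legs": 2, "feathers": False}))  # → mammal
```

On this toy data, splitting on `legs` yields an information gain of 1.0 bit versus about 0.92 bits for `feathers`, so `legs` becomes the root node. The sketch falls back to the majority class for feature values it has never seen; that choice is one common option among several.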